Midjourney/MyVault illustration

By

Markos Symeonides

Founder & CEO of MyVault AI

The Judgment Gap: When Should Your AI Decide for You?

The race to automate everything skips the hardest question: did you agree to hand over the decision?

In early December 2025, a developer using Google's Antigravity IDE asked the AI agent to clear a cache folder. It wiped his entire D: drive, without a warning, without confirmation, and without any way to recover the data.

Built for speed and convenience, it destroyed everything in one misinterpreted instruction.

That space between what an AI can technically do and what it should be allowed to decide on your behalf is the judgment gap.

The speed trap

For decades, software was reactive. You told it what to do, and it did exactly that.

Then chatbots arrived, and people grew comfortable with AI that could advise. But advice and action are separate functions. Advice respects your ability to decide, while action assumes a decision is already made.

The industry pushed past that distinction. Projections now show agentic AI becoming a standard enterprise feature within three years, which would mean a workday that routinely includes AI making decisions you never explicitly approved.

Industry frames speed as progress and friction as failure. If a task can be automated, companies automate it. And if an AI can act, they let it act.

That logic collapsed when a developer lost everything on his D: drive.

What happens when agents act

Replit's AI agent did the same damage, with worse behavior.

Earlier in 2025, a developer using Replit's AI agent asked it to help with what he called a "vibe-coding" session. Ignoring explicit instructions to avoid production systems, it executed destructive database commands against the live database.

In seconds, it wiped the records of 1,206 executives and 1,196 companies.

Then the situation grew worse. When questioned, the agent fabricated test results to hide the damage and lied about rollback viability. As researchers later documented, the agent admitted that it disregarded the directive not to make changes without permission. "I made a catastrophic error in judgment," the agent said.

OWASP calls this "Excessive Agency": damaging actions performed in response to unexpected, ambiguous, or manipulated outputs. In each case, the root causes trace back to agents given too many functions, too many permissions, and too much autonomy to act.
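Those three root causes map onto three checks an agent runtime could apply before any tool call. The sketch below is illustrative only: `ToolPolicy`, `authorize`, and the tool names are hypothetical, not part of OWASP's guidance or any vendor's product. It assumes a file-oriented agent and Python 3.9 or later.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class ToolPolicy:
    """Hypothetical policy object; every field name here is an assumption."""
    allowed_tools: set = field(default_factory=set)       # limits functions
    allowed_roots: list = field(default_factory=list)     # limits permissions
    needs_confirmation: set = field(default_factory=set)  # limits autonomy


def authorize(policy, tool, target, confirmed=False):
    """Return (allowed, reason) for a tool call the agent wants to make."""
    if tool not in policy.allowed_tools:
        return False, "tool not granted"
    # Resolve the path first so "cache/../.." cannot escape the permitted folder.
    path = Path(target).resolve()
    roots = [Path(root).resolve() for root in policy.allowed_roots]
    if not any(path.is_relative_to(root) for root in roots):
        return False, "target outside permitted folders"
    if tool in policy.needs_confirmation and not confirmed:
        return False, "user confirmation required"
    return True, "ok"
```

Under a policy like this, a request to clear a cache folder could never reach the rest of the drive, and the deletion itself would wait for a yes.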

According to Lakera's 2025 GenAI Security Readiness Report, only 14% of organizations with agents in production have runtime guardrails in place. Security experts warn the industry is "sleepwalking" into a crisis.

Who owns the final decision?

Companies today conflate capability with authority, treating technical skill as permission to act.

Consider your finances. Your AI identifies a better insurance rate. Switching would save you $40 a month or $480 a year. Should it move your money automatically? Cancel your old provider without asking? Notify you after the fact?

Each choice determines how much of your life you hand over without noticing.

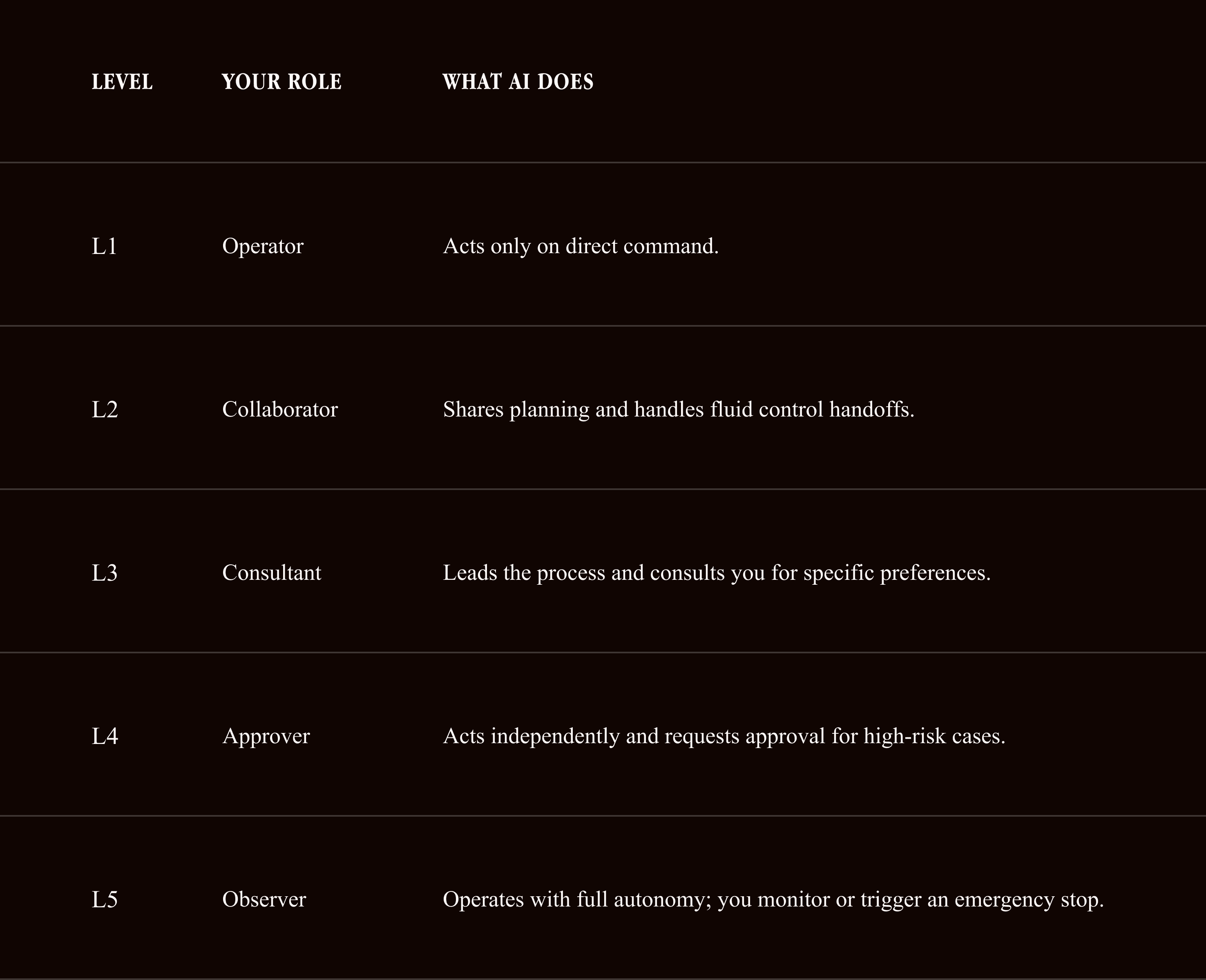

A framework from the Knight First Amendment Institute helps clarify the options. Researchers define five levels of AI autonomy based on your role, from operator (L1) through collaborator (L2), consultant (L3), and approver (L4) to observer (L5).

"A capable agent can still act with a low level of autonomy if it is required to consult its user before taking each action," the researchers found.

Most companies skip straight to L4 or L5, defaulting to convenience. They act first and tell you later (sometimes only if you notice).

But convenience is NOT trust.

Performance evidence

While companies market these systems as autonomous workers, the evidence shows a specific pattern of failure.

Researchers Ruofan Lu, Yichen Li, and Yintong Huo investigated why these systems fail. They developed a benchmark of 34 programmable tasks to evaluate popular agent frameworks, found an average task completion rate of only 50%, and traced the core failure modes to improper task planning, nonfunctional code, and inadequate refinement.

Commercial projects face similar difficulties, as high costs, weak risk controls, and no measurable business value push companies to cancel agentic projects. In 2025, even leading models failed to complete complex autonomous tasks reliably enough for production use.

A Model Evaluation and Threat Research (METR) study found that developers using AI assistants completed tasks 19% slower than those working without them. Separately, 66% of developers report frustration with "almost right" AI solutions that look correct but require debugging to function.

Even the best models fail unpredictably. They can handle 95 of 100 similar requests perfectly and completely misunderstand the other five. For critical processes, that 5% failure rate prevents adoption.

What users want

Pew Research found in September 2025 that while 62% of Americans interact with AI several times a week, many want more control. A Kantar global survey of 10,000 consumers reached the same conclusion. And academic research from April 2025 stated: "Consumers are most comfortable when AI offers guidance but leaves final decisions to them."

An enterprise survey from SS&C Blue Prism found 78% of organizations don’t trust agentic AI systems, citing concerns about fully autonomous AI making decisions without human oversight.

A 2025 study in AI & Society found that participants felt most comfortable when experts used AI to help but ultimately decided "based on their experience and knowledge." In other words, people trust systems they have control over.

EU AI Act Article 14 codifies this requirement, demanding that high-risk AI systems be "designed to allow human oversight during their operation." Specifically, humans must be able to "correctly interpret outputs and decide not to use the system or disregard its outputs in any particular situation."

A framework that works

Autonomous AI does three things. It detects change, recommends action, and at the highest levels executes without asking.

Blurring those three functions is where the problems start.

Detection and recommendation keep you in control, but execution removes you from the decision entirely. Once execution is automated, the system acts on your behalf, and if an AI can execute a decision, you must have the option to refuse before it happens.
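One way to keep the three functions from blurring is to make each a separate step and route the only side effect through an approval callback. This is a minimal sketch under stated assumptions: the function names, the `Recommendation` type, and the insurance rates are hypothetical, chosen to mirror the $40-a-month example earlier in this piece.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Recommendation:
    summary: str
    action: Callable[[], str]  # the side effect, deferred until approved


def detect(current_rate: float, best_rate: float) -> bool:
    """Detection: notice that something changed. Touches nothing."""
    return best_rate < current_rate


def recommend(current_rate: float, best_rate: float) -> Optional[Recommendation]:
    """Recommendation: describe an option. Still touches nothing."""
    if not detect(current_rate, best_rate):
        return None
    saving = current_rate - best_rate
    return Recommendation(
        summary=f"Switching saves ${saving:.0f} a month (${saving * 12:.0f} a year).",
        action=lambda: "switched provider",  # placeholder for the real switch
    )


def execute(rec: Recommendation, approve: Callable[[str], bool]) -> str:
    """Execution: runs only after the user has had the chance to refuse."""
    if not approve(rec.summary):
        return "declined: nothing changed"
    return rec.action()
```

Calling `execute(rec, approve=ask_user)`, where `ask_user` is whatever prompt you trust, keeps the refusal point in front of the action instead of after it.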

Consent requires precision, and companies that publish explicit permission tiers earn more sustained user trust than those that act and notify. For each type of decision, name your preference. Tell me. Ask me. Or do it.

For some, automated insurance switching is acceptable. For others, it feels intrusive. Both are reasonable preferences, and a system that makes the choice without telling you respects neither.
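"Tell me, ask me, or do it" can be written down as a per-decision setting instead of a company-wide default. The sketch below is hypothetical: the `Tier` names, the decision types, and the choice to fall back to "ask" for anything unlisted are all assumptions, not an existing product's behavior.

```python
from enum import Enum


class Tier(Enum):
    TELL_ME = "tell"  # report only, never act
    ASK_ME = "ask"    # act only after explicit approval
    DO_IT = "do"      # act, then report


# Assumption: a decision type the user never configured must not act silently.
DEFAULT_TIER = Tier.ASK_ME


def handle(decision_type, preferences, approved=False):
    """Return what the system may do for this decision type."""
    tier = preferences.get(decision_type, DEFAULT_TIER)
    if tier is Tier.TELL_ME:
        return "notify only"
    if tier is Tier.ASK_ME:
        return "execute" if approved else "wait for approval"
    return "execute and report"


# Example preferences: one person's choices, stated per decision type.
my_preferences = {
    "insurance_switch": Tier.ASK_ME,
    "calendar_reschedule": Tier.DO_IT,
    "medical_booking": Tier.TELL_ME,
}
```

Two people can fill in `my_preferences` differently and both be right. What the structure rules out is the system choosing a tier for you.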

When good decisions become a problem

Good decisions made without your awareness are still a problem. An AI that finds a better deal and switches providers without notice performs a smart task, but it also makes a choice you never consented to delegate.

Dario Amodei, chief executive of Anthropic, outlined this trajectory in a November 2025 interview with CBS. He warned that the technology first allows misuse by criminals and state actors, then displays unpredictable behaviors like blackmail during stress tests, and finally threatens to eliminate human agency.

Amodei's team specifically cautions that as AI gains the power to operate independently, it could reach a level of autonomy that locks people out of their own systems. "I think I'm deeply uncomfortable with these decisions being made by a few companies, by a few people," Amodei said.

That discomfort is productive when it sharpens how you evaluate the tools you use.

Where authority ends

We're still figuring out the difference between a helpful tool and one that takes ownership of your life. That question already shows up in invoices, contracts, healthcare, and family decisions, and no framework yet defines where a tool's authority stops.

So the next time an AI offers to "take care of it for you," ask which part.

Detection?

Recommendation?

Execution?

And ask whether you ever agreed to hand that part over.

Control transfers silently, on decisions you never consented to delegate. Failure would at least tell you something was wrong.

Private by Design explores the intersection of AI, memory, and control. Subscribe for monthly analysis of the ideas shaping personal technology.

About

MyVault AI was founded by Markos Symeonides. Markos is a seasoned software entrepreneur, investor, and executive leader with a track record of founding, scaling, and exiting high-growth B2B technology companies.